Deep Reinforcement Learning

Leverage the power of reinforcement learning to build your next AI solution

One of the key limitations of deep learning-powered artificial intelligence is that deep neural networks (DNNs) require large amounts of labeled data for training. Deep reinforcement learning (DRL) uses self-learning algorithms running on simulators to bypass this labeled-data limitation and fully realize the benefits of DNN-powered AI agents.

Labeled data, i.e., data that a human has created, validated, or tagged, is expensive to source, error-prone at scale, and in some situations simply unavailable. For instance, a speech recognition model requires hundreds of hours of human-captioned audio before it is even remotely usable. Similarly, a machine translation model from language A to language B may need a couple of million human-translated sentences before reaching an acceptable quality level. As a result, in most industries, access to labeled data is often a showstopper for real-life applications that target a company-specific need.

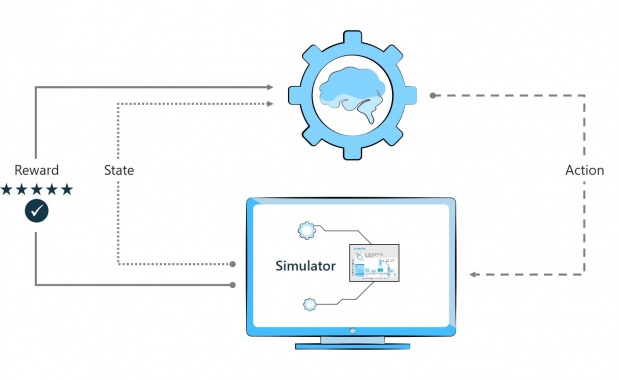

Reinforcement learning enables companies to work around these intrinsic AI training limitations by training the model not on labeled data but by letting it self-learn through trial and error. However, since it is not possible to let the AI run hundreds of thousands or millions of tests on a live device or process, the first step in reinforcement learning is to build a reliable simulator.
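To make "trial and error" more concrete, here is a minimal sketch of an epsilon-greedy agent that learns which of three process settings works best using only reward signals from a simulated process, with no labeled examples. The simulator function `simulate_setting`, its reward values, and the parameters are hypothetical and chosen purely for illustration; real DRL agents use deep neural networks rather than a simple table of estimates.

```python
import random


def simulate_setting(setting: int, rng: random.Random) -> float:
    """Hypothetical simulator: returns a noisy reward for a process setting.
    The true mean rewards below are made up for illustration."""
    true_means = [0.2, 0.5, 0.8]
    return true_means[setting] + rng.gauss(0.0, 0.1)


def epsilon_greedy(n_settings: int = 3, n_trials: int = 5000,
                   epsilon: float = 0.1, seed: int = 0) -> list[float]:
    """Learn an estimated value per setting purely from trial and error."""
    rng = random.Random(seed)
    estimates = [0.0] * n_settings
    counts = [0] * n_settings
    for _ in range(n_trials):
        # Explore a random setting with probability epsilon, otherwise exploit
        # the setting with the best current estimate.
        if rng.random() < epsilon:
            setting = rng.randrange(n_settings)
        else:
            setting = max(range(n_settings), key=lambda s: estimates[s])
        reward = simulate_setting(setting, rng)
        counts[setting] += 1
        # Incremental mean update: only observed rewards are used, no labels.
        estimates[setting] += (reward - estimates[setting]) / counts[setting]
    return estimates


estimates = epsilon_greedy()
best = max(range(len(estimates)), key=lambda s: estimates[s])
print(f"estimated values: {[round(v, 2) for v in estimates]}, best setting: {best}")
```

Every one of the 5,000 trials runs against the simulator rather than a live process, which is exactly why a reliable simulator has to come first.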

Another obstacle to the real-life use of custom AI is the complexity of defining the most appropriate architecture for a given problem. In fact, devising the best DNN architecture is usually a task left to PhDs and often leads to peer-reviewed scientific publications.

To circumvent this challenge, machine teaching leverages heuristics based on human experience to build architectures that, to a certain extent, help break open the black box of AI models.

Benefits

Advanced simulations

Self-trained AI through reinforcement learning

Human augmentation with AI or AI supervision by humans

Machine teaching

Machine teaching is an approach that leverages human experience and its associated heuristics to create more explainable AI. Rather than relying on a single black-box model, it builds architectures from smaller, more constrained models, each focused on solving one identifiable challenge.

For instance, instead of a single model that uses IR imaging to control an industrial oven, two sequential and explainable models could be used. The first would be an AI model that estimates the product temperature from IR imaging. The second would be a control system that adjusts the oven's input parameters based on the temperature estimated by the first model.
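The two-stage oven example can be sketched as two small components that can each be inspected on their own. This is a minimal illustration under stated assumptions: the IR image is reduced to a single hypothetical mean-intensity value, the "AI" estimator is a simple linear calibration fitted by least squares, and the controller nudges heater power in proportion to the temperature error. The class names, calibration data, setpoint, and gain are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class TemperatureEstimator:
    """Stage 1 (hypothetical): maps a mean IR intensity reading to a product
    temperature using a linear calibration fitted by least squares."""
    slope: float = 0.0
    intercept: float = 0.0

    def fit(self, ir_readings: list[float], temperatures: list[float]) -> None:
        n = len(ir_readings)
        mean_x = sum(ir_readings) / n
        mean_y = sum(temperatures) / n
        cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(ir_readings, temperatures))
        var = sum((x - mean_x) ** 2 for x in ir_readings)
        self.slope = cov / var
        self.intercept = mean_y - self.slope * mean_x

    def predict(self, ir_reading: float) -> float:
        return self.slope * ir_reading + self.intercept


@dataclass
class SimpleController:
    """Stage 2 (hypothetical): increments heater power in proportion to the
    gap between the setpoint and the estimated temperature."""
    setpoint: float
    gain: float
    min_power: float = 0.0
    max_power: float = 100.0

    def adjust(self, current_power: float, estimated_temp: float) -> float:
        error = self.setpoint - estimated_temp
        new_power = current_power + self.gain * error
        # Clamp to the heater's physical limits.
        return max(self.min_power, min(self.max_power, new_power))


estimator = TemperatureEstimator()
estimator.fit([10.0, 20.0, 30.0], [100.0, 150.0, 200.0])  # made-up calibration data
controller = SimpleController(setpoint=180.0, gain=0.5)

temp = estimator.predict(22.0)  # 5 * 22 + 50 = 160.0
power = controller.adjust(current_power=40.0, estimated_temp=temp)  # 40 + 0.5 * 20 = 50.0
```

Because the intermediate temperature estimate is exposed, a process specialist can check each stage separately, which is the explainability benefit machine teaching aims for.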

With machine teaching, process specialists can build AI-powered control systems that leverage their expertise effectively without needing to become AI experts. Check out these cool animated demos from Microsoft to see examples of machine teaching use cases.

Deep Reinforcement Learning with Azure ML

Using Azure Machine Learning, and with the help of our data scientists, we can enable non-AI experts to build reinforcement learning-based AI solutions for numerous business processes, such as manufacturing process control.

The AI agent uses input from a simulator to train its various components. Each component is defined based on human expertise and the associated heuristics.
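The sketch below shows, in simplified form, what "using input from a simulator to train" looks like: an agent repeatedly calls a simulator's `reset` and `step` methods and updates its estimates from the rewards it receives. It assumes a hypothetical toy simulator with ten discrete temperature levels and a target level of 5, and it uses tabular Q-learning as a stand-in for the deep RL algorithms an Azure Machine Learning solution would actually use. All names and parameters are illustrative.

```python
import random


class ToyOvenSimulator:
    """Hypothetical discrete simulator: temperature level 0..9, target level 5.
    Actions: 0 = lower heat, 1 = hold, 2 = raise heat."""
    N_LEVELS = 10
    TARGET = 5

    def __init__(self, seed: int = 0):
        self.rng = random.Random(seed)
        self.level = 0

    def reset(self) -> int:
        # Start each training episode from a random temperature level.
        self.level = self.rng.randrange(self.N_LEVELS)
        return self.level

    def step(self, action: int) -> tuple[int, float]:
        # Move the level down, keep it, or move it up, clamped to valid levels.
        self.level = min(self.N_LEVELS - 1, max(0, self.level + action - 1))
        reward = -abs(self.level - self.TARGET)  # 0 at target, negative elsewhere
        return self.level, reward


def train_q_learning(episodes: int = 3000, steps: int = 20, alpha: float = 0.1,
                     gamma: float = 0.9, epsilon: float = 0.1, seed: int = 0):
    rng = random.Random(seed)
    sim = ToyOvenSimulator(seed)
    q = [[0.0] * 3 for _ in range(sim.N_LEVELS)]
    for _ in range(episodes):
        state = sim.reset()
        for _ in range(steps):
            # Epsilon-greedy action selection: mostly exploit, sometimes explore.
            if rng.random() < epsilon:
                action = rng.randrange(3)
            else:
                action = max(range(3), key=lambda a: q[state][a])
            next_state, reward = sim.step(action)
            # Q-learning update toward reward + discounted best next value.
            td_target = reward + gamma * max(q[next_state])
            q[state][action] += alpha * (td_target - q[state][action])
            state = next_state
    return q


q_table = train_q_learning()
policy = [max(range(3), key=lambda a: q_table[s][a])
          for s in range(ToyOvenSimulator.N_LEVELS)]
print(policy)
```

If the sketch behaves as intended, the learned greedy policy raises the heat below level 5, holds at level 5, and lowers it above, without the agent ever seeing a labeled example.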

Once trained, the AI agent can be used in one of two ways:

- Human augmentation: the AI agent proactively offers the operator the option it calculates to be the best. This can significantly increase operator effectiveness, reduce quality issues, and avoid downtime. For instance, an AI agent could warn an operator of a potential failure so that the equipment is maintained or repaired proactively, before it impacts production.

- Human-supervised AI control: the AI agent takes control of the process, and the human is responsible for monitoring the AI and taking over when needed. A typical example of this situation outside of manufacturing is self-driving cars, where drivers must always be ready to take over but can rely on self-driving 99%+ of the time.
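The difference between the two usage modes comes down to who has the final say on each action. The following minimal sketch illustrates this with a hypothetical rule-based `toy_policy` standing in for a trained agent; the function names, temperature thresholds, and action labels are assumptions made for illustration only.

```python
from typing import Callable, Optional


def toy_policy(temperature: float) -> str:
    """Hypothetical trained agent: maps a temperature reading (deg C) to an action."""
    if temperature < 175.0:
        return "raise_heat"
    if temperature > 185.0:
        return "lower_heat"
    return "hold"


def human_augmentation(policy: Callable[[float], str], temperature: float,
                       operator_decision: Callable[[str], str]) -> str:
    """The agent only recommends; the operator decides what is applied."""
    recommendation = policy(temperature)
    return operator_decision(recommendation)


def supervised_control(policy: Callable[[float], str], temperature: float,
                       human_override: Optional[str] = None) -> str:
    """The agent acts by default; a supervising human can take over at any time."""
    if human_override is not None:
        return human_override
    return policy(temperature)


# Usage: an operator who accepts the recommendation vs. one who prefers to hold.
def accept(recommendation: str) -> str:
    return recommendation


def cautious(recommendation: str) -> str:
    return "hold"


print(human_augmentation(toy_policy, 170.0, accept))            # raise_heat
print(human_augmentation(toy_policy, 170.0, cautious))          # hold
print(supervised_control(toy_policy, 190.0))                    # lower_heat
print(supervised_control(toy_policy, 190.0, "emergency_stop"))  # emergency_stop
```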

Advanced process simulation

Without realistic process simulators, AI agents cannot be trained, because in most real-life situations reinforcement learning-based training cannot be performed on actual live processes.

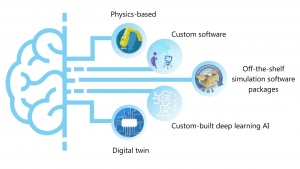

These simulators come in various shapes and complexity levels. There are five core strategies for building a simulator that can be used for DRL training of an AI agent:

- Physics-based models

- Custom software

- Off-the-shelf simulation platforms such as AnyLogic or MATLAB Simulink

- Custom deep-learning-based simulators

- In some cases, OEM supplied digital twins (learn more about the differences and similarities between those and simulators in this blog post)
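As an illustration of the first strategy, a physics-based model, here is a minimal sketch of an oven simulator built on a first-order thermal model, dT/dt = heat_gain * power - loss_rate * (T - ambient), integrated with an explicit Euler step. The model, its coefficients, the 180 °C setpoint, and the reward definition are all assumptions chosen for illustration; a production simulator would be calibrated against real process data. The `reset`/`step` interface mirrors the common pattern RL training loops expect.

```python
from dataclasses import dataclass


@dataclass
class OvenSimulator:
    """Hypothetical physics-based simulator: first-order thermal model
    dT/dt = heat_gain * power - loss_rate * (T - ambient), explicit Euler integration."""
    ambient: float = 20.0     # ambient temperature in deg C (assumed)
    heat_gain: float = 0.05   # deg C per second per % of heater power (assumed)
    loss_rate: float = 0.01   # heat loss coefficient in 1/s (assumed)
    dt: float = 1.0           # integration time step in seconds
    temperature: float = 20.0

    def reset(self) -> float:
        self.temperature = self.ambient
        return self.temperature

    def step(self, power: float, setpoint: float = 180.0) -> tuple[float, float]:
        power = max(0.0, min(100.0, power))  # clamp heater power to 0..100 %
        d_temp = self.heat_gain * power - self.loss_rate * (self.temperature - self.ambient)
        self.temperature += d_temp * self.dt
        reward = -abs(self.temperature - setpoint)  # closer to the setpoint is better
        return self.temperature, reward


sim = OvenSimulator()
sim.reset()
for _ in range(2000):
    temp, reward = sim.step(power=32.0)
# Steady state: ambient + heat_gain * power / loss_rate = 20 + 0.05 * 32 / 0.01 = 180 deg C
print(round(temp, 3), round(reward, 3))
```

Running thousands of such steps takes a fraction of a second, which is what makes the hundreds of thousands of training episodes needed for DRL feasible in a way a live oven never could.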

To learn more about simulation strategies for Autonomous Systems, check out this blog post.